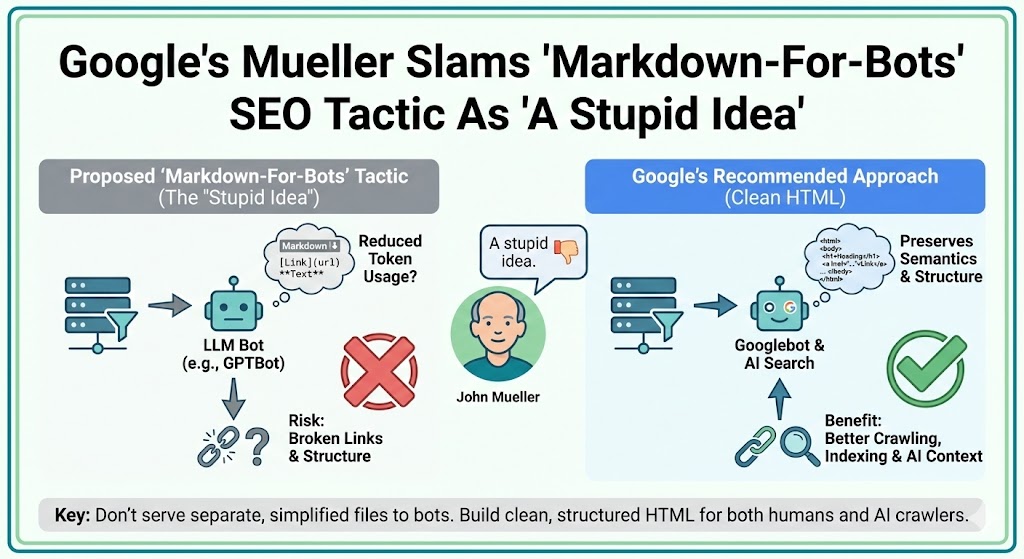

Google Questions Markdown Pages For LLM Crawlers

Google Search Advocate John Mueller pushed back on a growing idea in technical SEO circles: serving simplified Markdown files to AI crawlers instead of normal HTML pages.

The approach is meant to reduce token usage for large language models (LLMs) and make pages easier for AI systems to ingest. But Mueller made it clear he doesn’t see that strategy as helpful for Google Search, AI Overviews, or modern crawling systems.

His response was blunt.

On Bluesky, he described converting pages to Markdown specifically for bots as “a stupid idea.”

Where The Markdown‑For‑Bots Idea Came From

The discussion started when a developer explained they were using middleware to detect AI user agents like GPTBot or ClaudeBot. When those bots visited a page, the server delivered a raw Markdown version instead of the normal HTML.
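As a rough illustration of the setup described above, the user-agent switch might look something like the sketch below. Only the GPTBot and ClaudeBot names come from the discussion; the function names and the simple substring matching are assumptions made for illustration, not the developer's actual middleware.

```python
# Hypothetical sketch of a user-agent switch; not the developer's real code.
# GPTBot and ClaudeBot are the two crawlers named in the discussion.
AI_BOT_TOKENS = ("gptbot", "claudebot")


def is_ai_crawler(user_agent: str) -> bool:
    """Return True if the User-Agent header mentions a known AI crawler."""
    ua = (user_agent or "").lower()
    return any(token in ua for token in AI_BOT_TOKENS)


def choose_representation(user_agent: str) -> str:
    """Pick which version of the page to serve: 'markdown' or 'html'."""
    return "markdown" if is_ai_crawler(user_agent) else "html"


if __name__ == "__main__":
    print(choose_representation("Mozilla/5.0 (compatible; GPTBot/1.0)"))
    print(choose_representation("Mozilla/5.0 (Windows NT 10.0) Chrome/120"))
```

The User-Agent header is supplied by the client, so a switch like this only ever sees what a visitor claims to be.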

The goal was efficiency.

By stripping out JavaScript, layouts, and navigation, they claimed token usage dropped by roughly 95%, theoretically allowing AI systems to process more of the site for retrieval‑augmented generation (RAG) use cases.

In practice, the bot receives what looks like a plain text document rather than a structured web page.

Mueller’s Technical Concerns About Markdown Crawling

Mueller questioned whether this works at all.

He asked whether LLM bots can even recognize Markdown as anything other than a basic text file. He also raised concerns about link discovery and crawl paths.

Key issues he pointed out include:

• Can bots parse Markdown links reliably?
• Does internal linking survive when headers, footers, and navigation disappear?
• Will crawlers treat it as HTML or just raw text?
• Could removing structure hurt discoverability instead of helping?
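The link question can be made concrete with a small sketch. It assumes a crawler that discovers links only through HTML anchor tags, which is an assumption for illustration and not a statement about how any particular AI crawler works. The sample pages, class name, and regular expression are all hypothetical.

```python
import re
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collects href values from <a> tags, as an HTML-only crawler might."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def html_links(doc: str) -> list:
    """Return links found by parsing the document as HTML."""
    collector = LinkCollector()
    collector.feed(doc)
    collector.close()
    return collector.links


# Matches simple inline Markdown links of the form [text](url).
MD_LINK = re.compile(r"\[([^\]]+)\]\(([^)\s]+)\)")


def markdown_links(doc: str) -> list:
    """Return links found by a Markdown-aware pattern."""
    return [match.group(2) for match in MD_LINK.finditer(doc)]


# Hypothetical sample pages: the HTML version keeps its navigation,
# the Markdown version has had it stripped out.
HTML_PAGE = '<nav><a href="/pricing">Pricing</a></nav><p>See <a href="/docs">docs</a>.</p>'
MD_PAGE = "See [docs](/docs)."

if __name__ == "__main__":
    print(html_links(HTML_PAGE))    # both links are found
    print(html_links(MD_PAGE))      # an HTML-only parser finds nothing
    print(markdown_links(MD_PAGE))  # the navigation link is gone either way
```

Under these assumptions, the Markdown version exposes no links to an HTML-only parser, and even a Markdown-aware parser never sees the navigation link that was removed.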

In short, replacing semantic HTML with flat Markdown may remove the very signals search engines and AI systems depend on.

Why Clean HTML Still Matters For Googlebot And AI Search

Mueller’s comments follow a consistent pattern in Google’s guidance.

Googlebot and other crawlers are designed around HTML, not alternative formats created specifically for bots. Structured markup, headings, navigation, and internal links all help search engines understand hierarchy, context, and relationships between pages.

Flattening content into Markdown strips out much of that meaning.

From an SEO perspective, that can weaken:

• crawl budget efficiency
• internal linking signals
• document structure
• entity relationships
• accessibility and semantics

Those are the same signals used by Google Search, AI Overviews, and many LLM retrieval systems.

No Evidence Markdown Improves AI Visibility

There’s also no published proof that Markdown boosts AI citations or visibility.

Previous testing around llms.txt and bot‑specific formats found no measurable benefit for being cited in AI answers. Mueller has repeatedly compared those approaches to the deprecated keywords meta tag — technically possible, but unsupported and ineffective.

Without formal documentation from Google, OpenAI, Anthropic, or other platforms requesting Markdown pages, the tactic appears speculative.

What SEOs Should Do Instead

Mueller’s stance is straightforward: optimize the web version of your site, not a separate bot version.

For both classic SEO and AI search visibility, the safer path remains:

• clean, fast HTML
• clear heading hierarchy
• strong internal linking
• semantic markup
• structured data where supported
• minimal JavaScript blocking content
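One item on that list, a clear heading hierarchy, is easy to spot-check. The sketch below flags places where a page jumps more than one heading level at a time (for example, from h2 straight to h4). Treating a skipped level as a problem is an assumption made here for illustration, not a rule stated by Mueller or Google, and the class and function names are hypothetical.

```python
from html.parser import HTMLParser


class HeadingOutline(HTMLParser):
    """Records the level (1-6) of each heading tag in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        # Match h1..h6 only; tags such as <hr> or <html> are ignored.
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def skipped_levels(html: str) -> list:
    """Return (previous_level, level) pairs where a heading level is skipped.

    The outline starts at level 0, so a page whose first heading is not
    an h1 is reported as (0, level).
    """
    outline = HeadingOutline()
    outline.feed(html)
    outline.close()

    problems = []
    previous = 0
    for level in outline.levels:
        if level > previous + 1:
            problems.append((previous, level))
        previous = level
    return problems


if __name__ == "__main__":
    print(skipped_levels("<h1>Guide</h1><h2>Setup</h2><h4>Notes</h4>"))
```

Moving back up the outline, such as from h3 to h2, is not flagged, since that is how a new section normally begins.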

These fundamentals improve crawling for Googlebot and make it easier for AI systems to extract individual passages from a page.

The Bigger Picture For AI SEO

As AI Overviews and LLM‑powered search become more common, experiments like Markdown‑for‑bots are likely to continue. But unless platforms explicitly support alternative formats, creating bot‑only versions risks adding complexity without benefit.

For now, Mueller’s message is simple: build for the web, not for hypothetical bots.

If AI systems need your content, they’ll fetch the same structured HTML everyone else does.