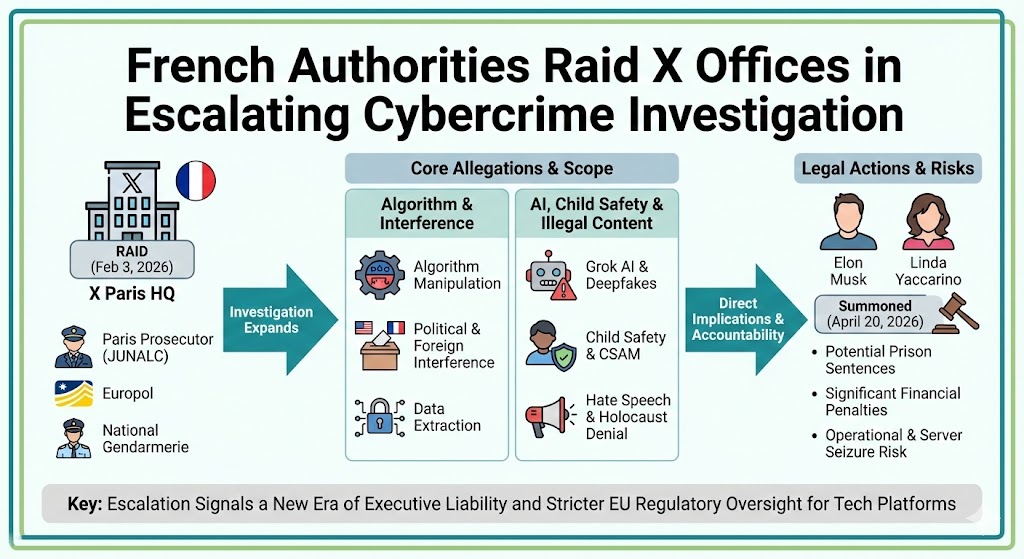

The French offices of the social media platform X (formerly Twitter) were raided by national authorities on February 3, 2026, as part of a significant expansion of a criminal investigation. The Paris prosecutor's cybercrime unit, supported by Europol and the National Gendarmerie, executed the search to gather evidence related to various illegal activities allegedly occurring on the platform.

The probe, which initially focused on algorithm manipulation and political interference, has grown to include serious allegations regarding child protection and the dissemination of deepfake content. In an unprecedented move, French officials have summoned owner Elon Musk and former CEO Linda Yaccarino for questioning in Paris on April 20, 2026.

This enforcement action represents one of the most aggressive legal steps taken by a European nation against the platform under its current leadership. As a symbolic gesture of the breakdown in relations, the Paris prosecutor's office announced it would cease using X for official communications.

Paris Prosecutors Target Platform Over Deepfakes and Algorithm Misuse

The raid and subsequent summonses follow a series of formal complaints filed over the past year. Below are the core facts regarding the current status of the investigation:

- Date of Raid: February 3, 2026.

- Location: X's French offices in Paris.

- Agencies Involved: Paris Prosecutor’s Cybercrime Unit (JUNALC), Europol, and the French National Gendarmerie.

- Summoned Individuals: Elon Musk and former CEO Linda Yaccarino.

- Interview Date: Scheduled for April 20, 2026, in Paris.

- Initial Complaint: Filed in January 2025 by French lawmakers.

Broadening Scope of the Criminal Investigation

While the investigation began with concerns over democratic integrity, it has evolved into a multi-pronged criminal inquiry. Prosecutors are investigating several distinct areas of non-compliance and illegal activity.

Allegations of Algorithmic Manipulation

The case rests on claims that X's recommendation engine was tampered with to influence public opinion.

- Political Bias: Allegations that algorithms were altered to favour far-right content during European elections.

- Foreign Interference: Investigation into whether "organized data system manipulation" was used to skew democratic debate.

- Data Extraction: Claims of fraudulent extraction of user data from automated systems.

Concerns Over Grok and AI Content

The introduction of X's AI chatbot, Grok, has significantly increased the platform's legal exposure in France and the wider EU.

- Sexual Deepfakes: Authorities are investigating the ease with which users can generate non-consensual sexual images of women and minors.

- Holocaust Denial: Reports that the AI generates content contesting or denying crimes against humanity.

- Systemic Risk: Failure to implement safety guardrails to prevent the automated creation of harmful material.

Child Safety and Illegal Material

A major component of the recent raid involves the platform's alleged failure to police the most severe forms of illegal content.

- CSAM: Investigation into "complicity" in possessing and spreading child sexual abuse material.

- Hate Speech: Failure to remove racist and anti-LGBT+ content after notification.

Direct Implications for X and the Tech Industry

The outcome of this investigation could have far-reaching impacts on how social media platforms operate within the European Union.

For X and Elon Musk:

- Legal Jeopardy: Possible prison sentences of up to 10 years for "organized data system manipulation."

- Financial Penalties: Fines exceeding $350,000 for specific criminal violations, separate from potential billions in EU-wide DSA fines.

- Operational Risk: The seizure of servers or internal communications could expose global platform management practices.

For the Global Tech Industry:

- Stricter AI Oversight: Increased pressure on AI developers to implement hard filters on image generation.

- End of Immunity: A clear signal that executive-level "hands-off" management styles will not protect leaders from personal summonses.

- Regulatory Domino Effect: Britain’s ICO and regulators in EU member states are already launching parallel probes based on the French findings.

The End of Platform Immunity: Navigating the Future of Tech Accountability

This escalation marks a fundamental shift in how European regulators view social media executives. For decades, Section 230 in the US and similar safe-harbour provisions in Europe shielded tech leaders from personal liability for user-generated content.

However, the French strategy of targeting "organized data system manipulation" and "complicity" suggests that authorities now view platform negligence as an active criminal choice rather than a passive byproduct of scale.

The formal summons of Elon Musk and Linda Yaccarino establishes a new precedent for executive liability, suggesting that platform safety is now a non-negotiable legal requirement rather than a corporate preference.

If X is forced to dismantle the algorithmic structures that drive its engagement and revenue, the platform may have to choose between fundamental reinvention and a complete withdrawal from one of the world's most strictly regulated markets.

The question remains: can a platform built on the ethos of absolute “free speech” survive a legal framework that demands absolute corporate responsibility?