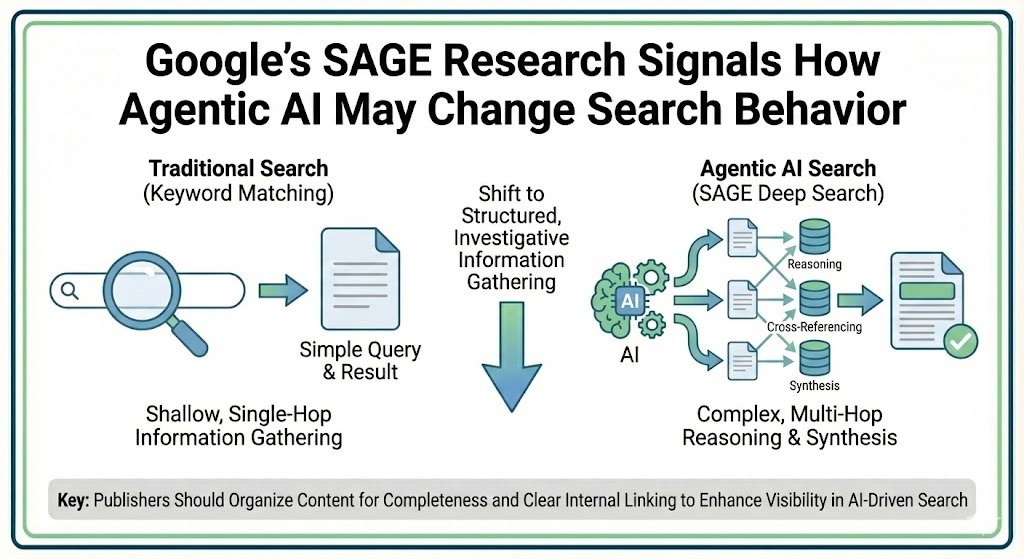

Google has released new research outlining how it trains AI agents to perform complex, multi-step search tasks. The project, called SAGE (Steerable Agentic Data Generation for Deep Search with Execution Feedback), focuses on building datasets that better reflect how “deep research” queries unfold in the real world.

While the paper is framed as a technical contribution to AI training, it also offers practical signals for how search behavior may evolve as agentic systems take on more investigative and synthesis-heavy tasks.

In short, the way AI agents gather information increasingly resembles structured, multi-hop search rather than simple keyword matching. That shift has implications for how publishers organize and present content.

Training AI to Think in Steps

Earlier AI search benchmarks typically required only one to three queries to find an answer. According to Google’s researchers, those datasets did not reflect the complexity of real-world research tasks, where users often need multiple searches, cross-referencing, and reasoning across documents.

SAGE was designed to close that gap.

The system uses two coordinated agents. One generates intentionally difficult questions that require several reasoning steps. The second attempts to solve them through search. If the answer is found too easily, the system analyzes how that shortcut occurred and feeds that information back into the data generation process.

The result is a collection of question-and-answer pairs that force deeper exploration instead of single-query wins.
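To make the generate, solve, and refine loop concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not Google's implementation: the `propose` and `solve` callables, the `min_queries` threshold, and the feedback strings are all hypothetical stand-ins, and the toy agents exist only to make the example runnable.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Attempt:
    """Outcome of one solver run (hypothetical structure)."""
    answer: Optional[str]
    queries: List[str]  # search queries the solver issued


@dataclass
class Example:
    question: str
    answer: str
    queries_used: int


def generate_dataset(
    propose: Callable[[List[str]], Tuple[str, str]],  # hypothetical question generator
    solve: Callable[[str], Attempt],                  # hypothetical search agent
    min_queries: int = 4,   # assumed difficulty target, not a figure from the paper
    max_rounds: int = 3,
    n_items: int = 2,
) -> List[Example]:
    """Keep only question-answer pairs the solver needed several searches to crack."""
    dataset: List[Example] = []
    for _ in range(n_items):
        feedback: List[str] = []  # notes on how earlier drafts were shortcut
        for _ in range(max_rounds):
            question, answer = propose(feedback)
            attempt = solve(question)
            solved = attempt.answer == answer
            if solved and len(attempt.queries) >= min_queries:
                dataset.append(Example(question, answer, len(attempt.queries)))
                break
            if solved:
                # Too easy: record the shortcut so the next draft can avoid it.
                feedback.append(
                    f"solved in {len(attempt.queries)} queries: {attempt.queries}"
                )
            else:
                feedback.append("solver could not confirm the answer")
    return dataset


# Toy stand-ins so the sketch runs without any model or search backend.
def toy_propose(feedback: List[str]) -> Tuple[str, str]:
    return ("hard question", "42") if feedback else ("easy question", "42")


def toy_solve(question: str) -> Attempt:
    n = 1 if "easy" in question else 4
    return Attempt("42", [f"q{i}" for i in range(1, n + 1)])
```

Calling `generate_dataset(toy_propose, toy_solve)` rejects the first "easy" draft for each item and keeps the revised one, which is the behavior the two-agent setup is meant to produce.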

Where “Deep” Search Breaks Down

During testing, Google identified several patterns that allowed the search agent to bypass multi-step reasoning entirely. In many cases, answers could be found faster than expected because of how information was structured on the web.

One common pattern occurred when multiple pieces of required information appeared in the same document. Instead of moving between sources, the agent solved everything in one place.

Another pattern involved queries that returned comprehensive results spanning several needs at once, effectively collapsing what should have been a multi-step investigation into a single query.

Other examples included overly detailed questions that surfaced exact answers immediately or questions that appeared complex but were trivial for search systems to resolve.
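The first two shortcut patterns can be illustrated with a small trace check. This is a rough sketch, assuming the solver logs which query and which document supplied each required fact; the `FactSource` record, the label names, and the example URLs are hypothetical and do not come from the paper. The other two patterns (overly detailed or trivially easy questions) are not covered here.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, List, Set


@dataclass(frozen=True)
class FactSource:
    """Where the solver found one required fact (hypothetical trace record)."""
    fact: str
    query: str  # the search query that surfaced it
    url: str    # the document it was read from


def shortcut_labels(trace: List[FactSource]) -> List[str]:
    """Flag traces where a multi-step question collapsed into fewer steps."""
    labels: List[str] = []

    # Pattern 1: one document supplied more than one required fact.
    facts_per_doc = Counter(s.url for s in trace)
    if any(n > 1 for n in facts_per_doc.values()):
        labels.append("co-located information")

    # Pattern 2: one query surfaced facts from several different documents.
    docs_per_query: Dict[str, Set[str]] = {}
    for s in trace:
        docs_per_query.setdefault(s.query, set()).add(s.url)
    if any(len(urls) > 1 for urls in docs_per_query.values()):
        labels.append("multi-need query")

    return labels


example_trace = [
    FactSource("founder", "acme founder", "https://example.com/acme"),
    FactSource("founding year", "acme founder", "https://example.com/acme"),
    FactSource("hq city", "acme founder", "https://example.com/hq"),
]
```

Here `shortcut_labels(example_trace)` flags both patterns, since a single query and a single page covered most of what the question needed.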

Although these shortcuts were considered “failures” for training deep-reasoning agents, they mirror what happens during real-world search. When a page answers everything clearly and directly, there is little reason to keep looking elsewhere.

What This Suggests for SEO

For marketers and publishers, these findings reinforce a familiar but increasingly important principle: completeness matters.

When key facts, explanations, and related context are consolidated into one coherent resource, both users and automated systems can resolve questions more efficiently. That reduces the need for additional hops across competing sites.

Comprehensive coverage does not mean cramming everything onto one page. Instead, it means structuring content so that related answers are easy to discover, either within the same document or through clear internal linking.

Google’s experiments also relied heavily on traditional search rankings to source information. In many cases, the agents pulled from the highest-ranking pages first, suggesting that classic visibility remains foundational even as AI layers evolve.

Classic Search Still Matters

Despite the focus on agentic AI, the mechanics described in the research rely on conventional search infrastructure. Agents still query search engines, retrieve results, and synthesize what they find.

That means standard SEO fundamentals (ranking well, being authoritative, and covering topics thoroughly) continue to influence whether content is surfaced.

Rather than optimizing specifically for “AI search,” the more practical approach is ensuring that pages are clear, structured, and comprehensive enough to serve as reliable sources when systems aggregate information.

The Bigger Picture

SAGE does not introduce a new ranking factor or immediate change to how search results work. Instead, it provides a glimpse into how future AI systems may evaluate and combine information across the web.

As search becomes more agent-driven, the pages that surface most often are likely to be those that resolve questions quickly, reduce ambiguity, and minimize the need for additional queries.

For publishers, that translates to a straightforward strategy: organize information cleanly, answer related questions together, and remain competitive in traditional rankings. The better a page functions as a complete resource, the more useful it becomes to both people and machines.