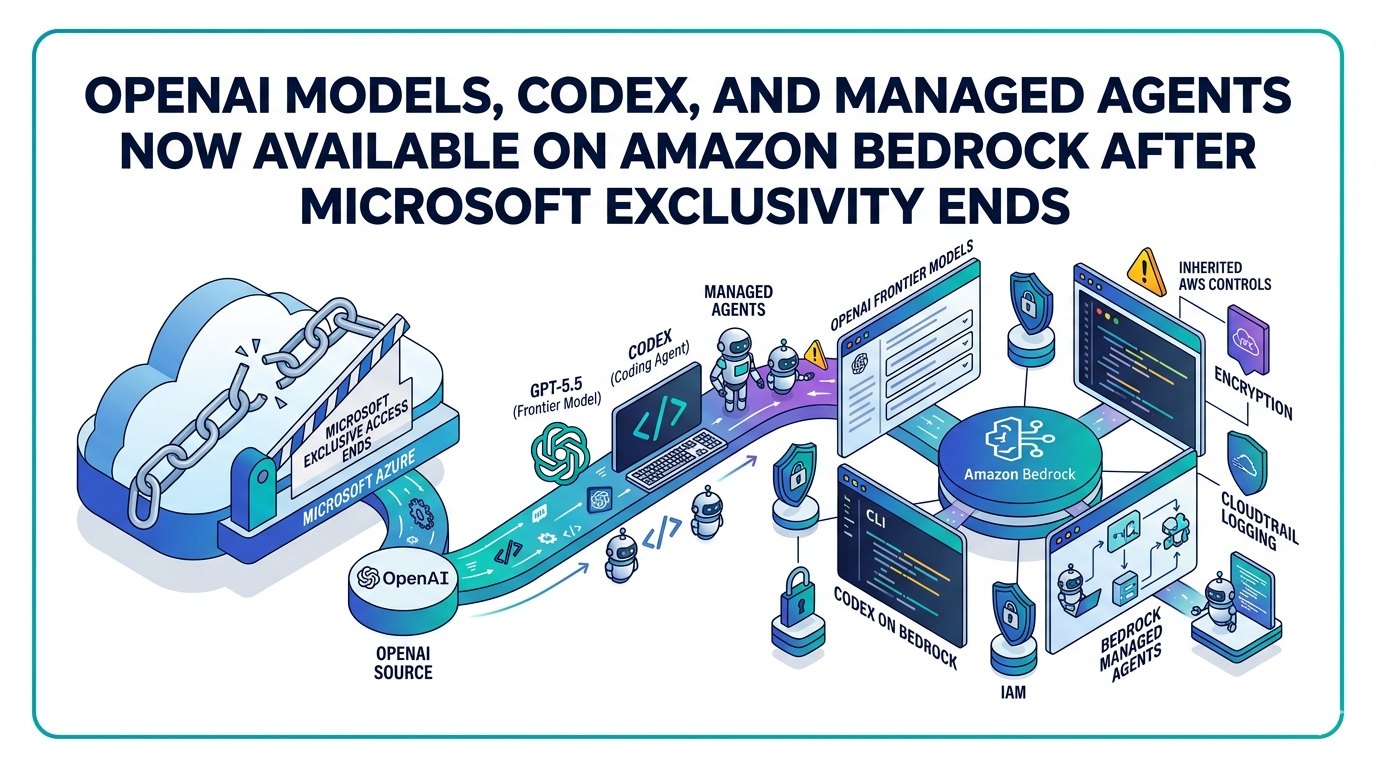

AWS enterprise customers can now access OpenAI's flagship AI models, its coding agent Codex, and a new agent-building service directly inside Amazon Bedrock, without routing through Microsoft's Azure cloud. OpenAI and Amazon Web Services confirmed the expansion in simultaneous announcements published April 29, 2026, one day after Microsoft and OpenAI disclosed an amended partnership that ended Azure's status as the exclusive third-party cloud provider for OpenAI's products.

The Microsoft Exclusivity That Blocked the Deal

For years, OpenAI's commercial agreement with Microsoft prevented other cloud providers from distributing its API-accessible products. Microsoft retained exclusive rights to any OpenAI product accessed through an API and had agreed to let OpenAI run only select consumer products, such as ChatGPT, on other clouds. That arrangement became a direct obstacle in February 2026, when Amazon and OpenAI announced a major strategic partnership. Amazon agreed to invest up to $50 billion in OpenAI and committed AWS as the exclusive third-party cloud distribution provider for OpenAI's enterprise platform, Frontier. OpenAI simultaneously expanded its existing $38 billion AWS infrastructure agreement by $100 billion over eight years.

The Financial Times reported that Microsoft contemplated legal action to enforce its contract terms. The renegotiated agreement, announced April 28, eliminated Microsoft's exclusive rights and resolved that legal exposure. Under the revised terms, Microsoft will retain a license to OpenAI's intellectual property for models and products through 2032, but that license is now non-exclusive, and Microsoft will no longer pay a revenue share to OpenAI.

Three Products Now Live on Bedrock in Limited Preview

AWS announced a major expansion of its partnership with OpenAI, bringing three new offerings to Amazon Bedrock, all in limited preview: OpenAI models, Codex, and Amazon Bedrock Managed Agents, powered by OpenAI. The first, OpenAI models on Amazon Bedrock, is available through the same Bedrock APIs and controls customers already use.

The launch includes GPT-5.5, OpenAI's current frontier model. For the first time, AWS customers can access OpenAI frontier models through the services they already use for model access, fine-tuning, and orchestration. They can also evaluate and deploy OpenAI models alongside models from Anthropic, Meta, Mistral, Cohere, and Amazon through a single, consistent service.

The second component is Codex on Amazon Bedrock. More than 4 million people currently use Codex every week, deploying it across the software development lifecycle to write code, explain systems, refactor applications, generate tests, and modernize legacy codebases. Organizations can now power Codex with OpenAI models served directly from Amazon Bedrock by configuring Codex to use Bedrock as the provider, giving customers enterprise-grade security, billing, and high availability. All customer data is processed by Amazon Bedrock, and eligible customers can apply Codex usage toward their AWS cloud commitments. Access is available through the Bedrock API, starting with Codex CLI, the Codex desktop app, and the Visual Studio Code extension.

The third product is Amazon Bedrock Managed Agents, powered by OpenAI. With Bedrock Managed Agents, organizations can build agents that maintain context, execute multi-step workflows, use tools, and take action across complex business processes. The service is specifically designed to use OpenAI's reasoning models, offering features such as agent steering and security controls.

Enterprise Security and Procurement Controls Carry Over

A central aspect of the announcement is that OpenAI's models, when accessed through Bedrock, inherit the full set of enterprise controls AWS customers already depend on. These include IAM-based access management, AWS PrivateLink connectivity, guardrails, encryption at rest and in transit, comprehensive logging through AWS CloudTrail, and integration with existing compliance frameworks, with no additional infrastructure to configure and no new security model to learn.

Customers can apply OpenAI model usage toward their existing AWS cloud commitments and consolidate AI spend alongside broader AWS workloads, simplifying procurement and financial governance for organizations already managing significant cloud investments on AWS.

AWS CEO Comments at San Francisco Launch Event

AWS CEO Matt Garman, speaking at a launch event in San Francisco, said: "This is what our customers have been asking us for for a really long time." OpenAI CEO Sam Altman did not attend in person, citing a court appearance in Oakland related to his legal dispute with Elon Musk, and sent a recorded message instead.

What This Means for Enterprise Martech and AI Procurement

For enterprise marketing and technology teams already operating within AWS environments, the practical change is one of procurement consolidation. Teams that previously had to maintain separate Azure relationships specifically to access OpenAI's API-accessible products, such as GPT models used in content generation, automation pipelines, or agentic workflows, can now access those same capabilities through existing Bedrock accounts, security credentials, and AWS billing agreements. Companies that had turned to Microsoft because Azure was the only cloud offering OpenAI's models no longer face that constraint, and their existing AWS security configurations and compliance certifications carry over. The shift does not automatically migrate existing integrations, but it removes the primary structural barrier to building OpenAI-powered martech directly on AWS.

General Availability Expected Within Weeks

AWS customers can currently experiment with OpenAI's models and Codex through Amazon Bedrock, with general availability expected in the next few weeks. AWS will also serve as the exclusive third-party cloud distribution provider for OpenAI Frontier, the company's most advanced enterprise platform for deploying and managing teams of AI agents across business systems.