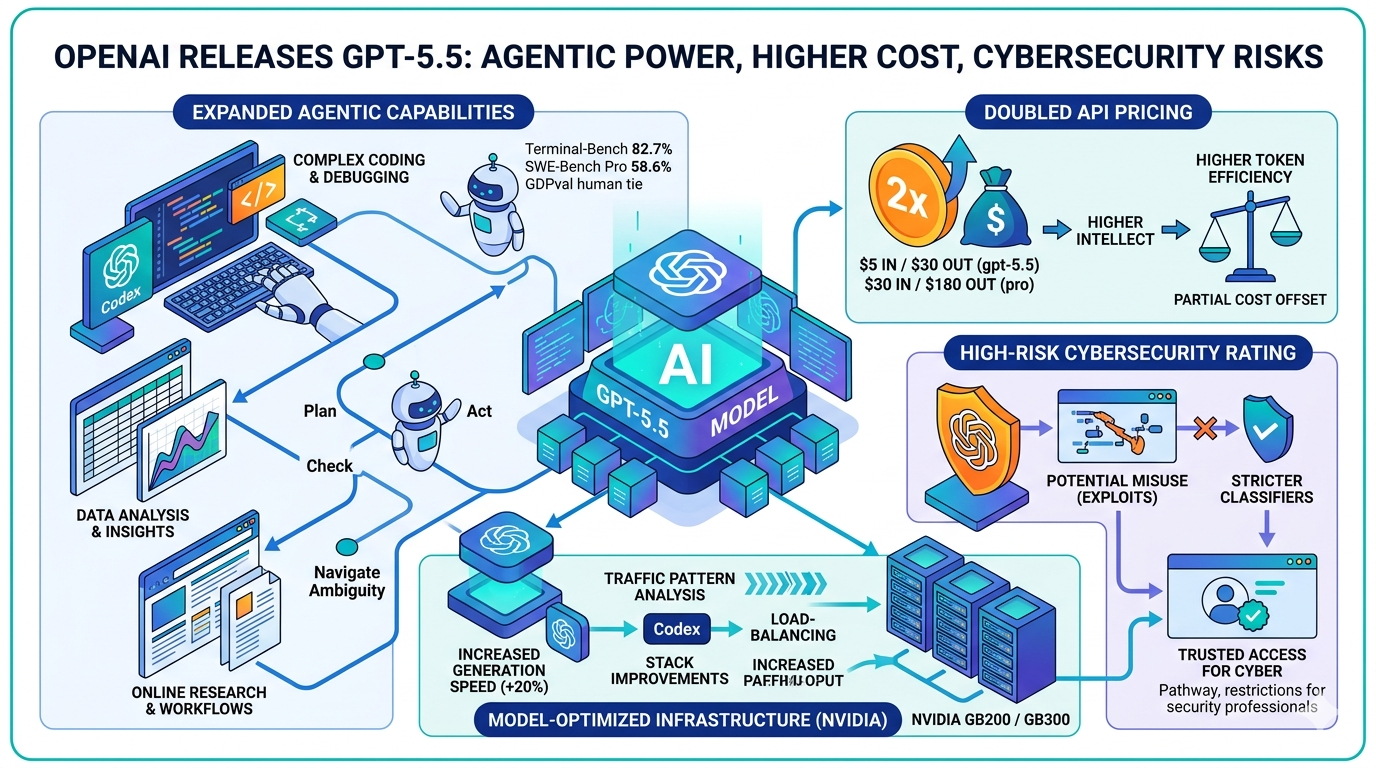

On April 23, 2026, OpenAI released GPT-5.5 to paid ChatGPT and Codex subscribers, describing it as its "smartest and most intuitive to use model yet" and the next step toward a new way of getting work done on a computer. The launch comes seven weeks after GPT-5.4, and the new model's API pricing is double that of its predecessor.

What GPT-5.5 Is Designed to Do

According to OpenAI's April 23 announcement, the model excels at writing and debugging code, researching online, analyzing data, creating documents and spreadsheets, and operating software. OpenAI says users can give GPT-5.5 a complex, multi-part task and trust it to plan, use tools, check its work, navigate ambiguity, and keep going.

OpenAI stated that performance gains are especially strong in agentic coding, computer use, knowledge work, and early scientific research, areas where progress depends on reasoning across context and taking action over time.

OpenAI also says that across these domains GPT-5.5 is not just more intelligent but more efficient in how it works through problems, often reaching higher-quality outputs with fewer tokens and fewer retries.

Benchmark Performance Against Competing Models

GPT-5.5 matches GPT-5.4's per-token latency while scoring higher on coding benchmarks: 82.7% on Terminal-Bench 2.0, up from GPT-5.4's 75.1%. On SWE-Bench Pro, GPT-5.5 reached 58.6%, while Anthropic's Claude Opus 4.7 still leads that benchmark at 64.3%.

On knowledge-work tasks, OpenAI claims state-of-the-art performance for GPT-5.5 on GDPval, a benchmark that measures AI performance across 44 occupations. According to the company's official blog, GPT-5.5 outperformed or tied human workers on about 85% of benchmarked tasks, compared with 83% for GPT-5.4 and 80% for Anthropic's Opus 4.7.

OpenAI also noted in its April 23 post that GPT-5.5 shows a clear improvement over GPT-5.4 on GeneBench, a new evaluation focused on multi-stage scientific data analysis in genetics and quantitative biology.

Infrastructure: Model-Assisted Optimization on NVIDIA Hardware

OpenAI stated that serving GPT-5.5 at GPT-5.4 latency required rethinking inference as an integrated system. GPT-5.5 was co-designed for, trained with, and served on NVIDIA GB200 and GB300 NVL72 systems.

A notable aspect of the infrastructure work is that the model helped improve the infrastructure that serves it. OpenAI said Codex analyzed weeks of production traffic patterns and helped produce new load-balancing and partitioning heuristics that increased token generation speeds by more than 20%, and that GPT-5.5 also helped find and implement key improvements in the serving stack itself.

Cybersecurity Classification and New Access Controls

According to OpenAI's GPT-5.5 System Card, the company is treating GPT-5.5 as High capability in the Cybersecurity domain, but below Critical. OpenAI said it has strengthened its cybersecurity safeguards for this launch to reflect the model's greater capabilities in this domain.

The distinction between High and Critical matters for how the model is deployed. Under OpenAI's Preparedness Framework, a Critical cybersecurity rating applies to a model that can identify and develop functional zero-day exploits of all severity levels in many hardened real-world critical systems without human intervention. Although GPT-5.5 shows stronger cybersecurity capabilities than GPT-5.4, the System Card notes that it cannot develop functional zero-day exploits of all severity levels, which keeps it below that threshold.

OpenAI stated it is releasing GPT-5.5 with its strongest set of safeguards to date, designed to reduce misuse while preserving access for beneficial work. The company evaluated the model across its full suite of safety and preparedness frameworks, worked with internal and external red teamers, added targeted testing for advanced cybersecurity and biology capabilities, and collected feedback from nearly 200 trusted early-access partners before release.

OpenAI acknowledged in its April 23 announcement that it is deploying stricter classifiers for potential cyber risk with GPT-5.5, and that some users may find them annoying at first while the company tunes them over time.

For security professionals who need broader access, OpenAI said it is making cyber-permissive models available through a Trusted Access for Cyber program, starting with Codex. At launch, the program gives verified users who meet certain trust signals expanded access to GPT-5.5's advanced cybersecurity capabilities with fewer restrictions. Users can apply for trusted access at chatgpt.com/cyber to reduce unnecessary refusals while using GPT-5.5 for verified defensive work.

Availability and Pricing

As of April 23, GPT-5.5 is rolling out to Plus, Pro, Business, and Enterprise users in ChatGPT and Codex, while GPT-5.5 Pro is rolling out to Pro, Business, and Enterprise users in ChatGPT. OpenAI stated that API deployments require different safeguards and that it is working closely with partners and customers on the safety and security requirements for serving at scale, with GPT-5.5 and GPT-5.5 Pro coming to the API very soon.

In Codex, GPT-5.5 is available for Plus, Pro, Business, Enterprise, Edu, and Go plans with a 400K context window. A Fast mode is also available, generating tokens 1.5x faster for 2.5x the cost.
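The Fast mode trade-off can be reasoned about with simple arithmetic. This is a minimal sketch, assuming that generation time dominates a task's wall-clock time and that the 2.5x multiplier applies uniformly to the task's standard-mode cost; `fast_mode_tradeoff` is a hypothetical helper, not part of any OpenAI tool.

```python
# Multipliers stated by OpenAI for Codex Fast mode.
FAST_SPEEDUP = 1.5          # tokens generated 1.5x faster
FAST_COST_MULTIPLIER = 2.5  # at 2.5x the cost


def fast_mode_tradeoff(standard_minutes: float, standard_cost: float) -> dict:
    """Hypothetical helper comparing one task in standard vs Fast mode.

    Assumes generation time dominates wall-clock time and that the cost
    multiplier applies uniformly to the task's standard-mode cost.
    """
    if standard_minutes <= 0:
        raise ValueError("standard_minutes must be positive")
    fast_minutes = standard_minutes / FAST_SPEEDUP
    fast_cost = standard_cost * FAST_COST_MULTIPLIER
    minutes_saved = standard_minutes - fast_minutes
    extra_cost = fast_cost - standard_cost
    return {
        "fast_minutes": fast_minutes,
        "fast_cost": fast_cost,
        "minutes_saved": minutes_saved,
        # Premium paid for each minute of waiting avoided.
        "extra_cost_per_minute_saved": extra_cost / minutes_saved,
    }


# Example: a task that takes 30 minutes and costs $1.00 in standard mode.
result = fast_mode_tradeoff(30.0, 1.00)
print(result)
```

Under these assumptions the 30-minute task finishes in 20 minutes for $2.50, and each minute saved costs 4.5 times the standard per-minute rate regardless of task size, since (2.5 - 1) / (1 - 1/1.5) = 4.5.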

For API developers, gpt-5.5 will be available in the Responses and Chat Completions APIs at $5 per 1M input tokens and $30 per 1M output tokens, with a 1M context window. Batch and Flex pricing are available at half the standard API rate, while Priority processing is available at 2.5x the standard rate. OpenAI also confirmed it will release gpt-5.5-pro in the API, priced at $30 per 1M input tokens and $180 per 1M output tokens.
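To turn the published rates into a budget figure, a minimal estimator might look like the sketch below. `estimate_cost` and the tier names are hypothetical; the sketch assumes the Batch, Flex, and Priority multipliers apply to both models, and it models no pricing details beyond those listed above.

```python
# Standard API prices in USD per 1M tokens, as announced by OpenAI.
PRICES_PER_MILLION = {
    "gpt-5.5": {"input": 5.00, "output": 30.00},
    "gpt-5.5-pro": {"input": 30.00, "output": 180.00},
}

# Multipliers relative to the standard rate: Batch and Flex at half,
# Priority at 2.5x. Applying them to gpt-5.5-pro is an assumption.
TIER_MULTIPLIERS = {"standard": 1.0, "batch": 0.5, "flex": 0.5, "priority": 2.5}


def estimate_cost(model: str, input_tokens: int, output_tokens: int,
                  tier: str = "standard") -> float:
    """Hypothetical estimator: USD cost of a workload at list prices."""
    prices = PRICES_PER_MILLION[model]
    standard = (input_tokens * prices["input"]
                + output_tokens * prices["output"]) / 1_000_000
    return standard * TIER_MULTIPLIERS[tier]


# Example workload: 2M input tokens and 500K output tokens.
for tier in TIER_MULTIPLIERS:
    print(tier, estimate_cost("gpt-5.5", 2_000_000, 500_000, tier))
```

For this workload the standard rate comes to $25.00 ($10 for input plus $15 for output), Batch or Flex to $12.50, and Priority to $62.50.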

OpenAI acknowledged in its April 23 post that GPT-5.5 is priced higher than GPT-5.4, arguing that it is both more intelligent and more token-efficient. In Codex, OpenAI said it has tuned the experience so that, for most users, GPT-5.5 delivers better results with fewer tokens than GPT-5.4.
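Whether token efficiency offsets the higher price comes down to arithmetic. This is a minimal sketch, assuming GPT-5.4's API rates were exactly half of GPT-5.5's, as the description of the new pricing as "double" implies; `cost_ratio` and the token reductions passed to it are hypothetical inputs, not figures from OpenAI.

```python
# USD per 1M tokens. GPT-5.5 rates come from OpenAI's announcement.
GPT_5_5 = {"input": 5.00, "output": 30.00}
# ASSUMPTION: GPT-5.4 rates derived by halving GPT-5.5's, since the
# article describes GPT-5.5's API pricing as double its predecessor's.
GPT_5_4_ASSUMED = {"input": 2.50, "output": 15.00}


def task_cost(prices: dict, input_tokens: float, output_tokens: float) -> float:
    """USD cost of one task at the given per-1M-token prices."""
    return (input_tokens * prices["input"]
            + output_tokens * prices["output"]) / 1_000_000


def cost_ratio(input_tokens: float, output_tokens: float,
               input_reduction: float = 0.0,
               output_reduction: float = 0.0) -> float:
    """GPT-5.5 cost divided by GPT-5.4 cost for the same task.

    Hypothetical: the reductions are the fractions of GPT-5.4's token
    usage that GPT-5.5 avoids (0.2 means 20% fewer tokens).
    """
    new = task_cost(GPT_5_5,
                    input_tokens * (1 - input_reduction),
                    output_tokens * (1 - output_reduction))
    old = task_cost(GPT_5_4_ASSUMED, input_tokens, output_tokens)
    return new / old


# A task that used 1M input and 100K output tokens on GPT-5.4.
for reduction in (0.0, 0.2, 0.5):
    ratio = cost_ratio(1_000_000, 100_000, reduction, reduction)
    print(f"{reduction:.0%} fewer tokens -> {ratio:.2f}x the GPT-5.4 cost")
```

Because every rate doubles under this assumption, GPT-5.5 breaks even only when it uses half as many tokens; a 20% saving still leaves the bill about 60% higher.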

Practical Implications for Marketers, Developers, and Enterprise Teams

For teams using Codex or the OpenAI API for content production, data analysis, or automated workflows, the token efficiency gains OpenAI describes could partially offset the doubled per-token API price, though the size of that offset will depend heavily on task type and token consumption per session. Organizations with high-volume API workloads should model their actual cost impact before the API goes live.

Security and compliance teams should note that the High cybersecurity rating under OpenAI's Preparedness Framework has brought stricter automated classifiers, which may affect legitimate security-adjacent use cases until OpenAI refines them. Enterprises seeking expanded cybersecurity capabilities should review the Trusted Access for Cyber program's verification requirements before applying.

OpenAI co-founder and president Greg Brockman, speaking to journalists on April 23, called GPT-5.5 a big advancement "towards more agentic and intuitive computing," and said it represents one step toward OpenAI's longer-term goal of a unified "super app."