The role of programmers is quietly but visibly broadening, from "people who write code" to lead contributors to better SERP (search engine results page) rankings. Their work is now central to the success of any SEO strategy. In this post I would like to highlight how programmers can contribute substantially to the commercial success of every website they develop.

SEO Strategies for Better SERP

The strategies below can help improve SERP rankings, and every programmer can follow them. Note that the focus here is on "dynamic content", not static content.

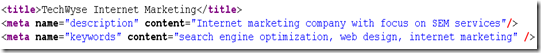

1. Meta Titles, Descriptions & Keywords

Meta titles and descriptions play a significant part in how search engines index pages and present them in results, so they deserve attention on every page. The keywords meta tag carries far less weight: Google has said it does not use it for web ranking.

For all dynamic pages, the Meta tags should be dynamic too, as seen at https://www.techwyse.com/blog/

Click on any of the posts and view the source of the topic detail page. The Meta title is the same as the title of the topic, and the Meta description contains the first few characters of the topic description. Even though the same page processes every post, the Meta tags change dynamically according to the post. You can also provide an option in the admin so that the title and description appear exactly as you wish for each record. This requires two additional fields in the admin.
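
To make the idea concrete, here is a minimal sketch in Python of how one template could build its Meta tags per post. The function name `build_meta_tags`, the `custom_title` and `custom_description` parameters (standing in for the two admin fields) and the 155-character limit are all my own assumptions for illustration, not how the blog above is actually built.

```python
from html import escape


def build_meta_tags(title, body, custom_title=None, custom_description=None,
                    max_length=155):
    """Return <title> and meta description markup for a single post.

    custom_title / custom_description are hypothetical admin overrides;
    when they are empty, the tags are derived from the post itself.
    """
    meta_title = custom_title or title
    if custom_description:
        description = custom_description
    else:
        # Collapse whitespace, then cut the text at a word boundary.
        text = " ".join(body.split())
        if len(text) > max_length:
            text = text[:max_length].rsplit(" ", 1)[0] + "..."
        description = text
    # Escape the values so quotes or angle brackets cannot break the markup.
    return (
        f"<title>{escape(meta_title)}</title>\n"
        f'<meta name="description" content="{escape(description)}">'
    )
```

Calling `build_meta_tags(post_title, post_body)` for each record gives every post its own title and description, while the two optional arguments let an editor override them by hand.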

On another note, if you are looking for a great piece of blog software that is search engine friendly, we highly recommend WordPress.

2. Search Engine Friendly URLs

Search engine friendly URLs tell both search engines and readers what a page is about, and they tend to be crawled and indexed more readily than URLs built from query strings. Take https://www.techwyse.com/blog/internet-marketing/microsoft-launches-bing-search/ as an example: "internet-marketing" is the category, and "microsoft-launches-bing-search" comes from the title of the post, "Microsoft launches Bing search", which sits under that category. URLs structured by category like this prove particularly effective in an SEO strategy.

Programmers should ensure that the generated URL is valid, with no spaces or special characters left over from the category or post title.
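
One way to keep generated URLs valid is to turn each category and title into a "slug" before building the link. The sketch below is my own illustration in Python; the helper names `slugify` and `post_url` are hypothetical, and a real site would also need to handle two posts that produce the same slug.

```python
import re
import unicodedata


def slugify(text):
    """Lowercase the text, strip accents, and hyphenate everything else."""
    ascii_text = (
        unicodedata.normalize("NFKD", text)
        .encode("ascii", "ignore")
        .decode("ascii")
    )
    # Replace each run of non-alphanumeric characters with a single hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", ascii_text.lower()).strip("-")
    if not slug:
        raise ValueError("text does not contain any URL-safe characters")
    return slug


def post_url(base_url, category, title):
    """Build a category-based friendly URL for a post."""
    return f"{base_url.rstrip('/')}/{slugify(category)}/{slugify(title)}/"
```

For example, `post_url("https://www.techwyse.com/blog", "Internet Marketing", "Microsoft launches Bing search")` produces the same URL shape as the example above.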

Apache's mod_rewrite rules, placed in an .htaccess file, can help achieve SEO friendly URLs.
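
A rewrite rule simply maps the friendly URL back to the parameters the dynamic page needs. To show that mapping without tying it to a particular server setup, here is a rough Python equivalent. The `/blog/<category>/<slug>/` pattern and the `route` function are assumptions of mine for illustration; they are not the actual rules used on any site.

```python
import re

# Hypothetical pattern: /blog/<category>/<post-slug>/
FRIENDLY_URL = re.compile(
    r"^/blog/(?P<category>[a-z0-9-]+)/(?P<slug>[a-z0-9-]+)/?$"
)


def route(path):
    """Return the category and slug hidden in a friendly URL, or None."""
    match = FRIENDLY_URL.match(path)
    if match is None:
        return None  # not a post URL; let the server answer with a 404
    return {"category": match.group("category"), "slug": match.group("slug")}
```

The dynamic page can then look up the record by category and slug, exactly as it would have done with query string parameters.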

Note that once you switch to friendly URLs, document-relative paths (for example, images/logo.png) will no longer resolve correctly, because the browser treats each segment of the friendly URL as a directory. All links to images, CSS or JS should be absolute: either a full URL or a path that starts with /.
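
You can see why relative paths break by resolving them the way a browser would. This small Python sketch uses the standard library's `urljoin`; the `images/logo.png` path is a made-up example of mine, not a real file on the site.

```python
from urllib.parse import urljoin


def resolve(page_url, reference):
    """Resolve a link found on page_url the way a browser would."""
    return urljoin(page_url, reference)


page = "https://www.techwyse.com/blog/internet-marketing/microsoft-launches-bing-search/"

# A document-relative path is resolved inside the "virtual" post folder.
broken = resolve(page, "images/logo.png")

# A path starting with / is resolved from the site root and keeps working.
working = resolve(page, "/images/logo.png")
```

Here `broken` ends in `/microsoft-launches-bing-search/images/logo.png`, a location that does not exist on disk, while `working` is `https://www.techwyse.com/images/logo.png`.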

3. Alt and Title Tags

Programmers must also make sure that images get proper alt attributes (often called "alt tags") and that all links get title attributes. For better relevance, provide a separate field when an image is uploaded and use its value as the alt text. Another option is to give the image file a descriptive name and use that instead, so that the extra field can be avoided. I prefer the former method, because the latter risks one image overwriting another when the same file name is used twice.
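
As a small illustration of the first method, the Python sketch below renders an image and a link from values stored alongside each record. The helper names `image_tag` and `link_tag` are hypothetical; the point is only that the alt and title text come from their own fields and are escaped before they reach the page.

```python
from html import escape


def image_tag(src, alt_text):
    """Render an <img> whose alt text comes from a separate upload field."""
    return f'<img src="{escape(src)}" alt="{escape(alt_text)}">'


def link_tag(href, label, title):
    """Render a link with a descriptive title attribute."""
    return f'<a href="{escape(href)}" title="{escape(title)}">{escape(label)}</a>'
```

Because the alt text lives in its own field, the stored file name can be anything unique, which avoids the overwriting problem mentioned above.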

4. Page Loading

Search engine bots tend to crawl fast-loading, well-optimized pages more efficiently than pages that load slowly and have had no optimization at all.

a) Image optimization

Images need to be optimized properly. Always create thumbnail images if a smaller version of a big image needs to be shown. Personally, I have seen many sites make images smaller simply by setting the width and height attributes. This is the wrong method and should NOT be followed: the browser still downloads the full-size file.

b) Cache

If your site loads large amounts of data from your database or calls a web service, pages may load rather slowly because of the number of server requests. In this case, cache files are the preferred method.

Caching here means taking the content from the database and writing it to a file at regular intervals. The programmer controls the interval, which depends on how frequently the content is updated: if the content changes only once a day, the cache file needs to be refreshed only once a day. This cache file is what the user is shown when a request is made. In other words, the file looks just like the 'view source' of the dynamic page.

Caching reduces the load time tremendously and gives the server some "relax time".
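
The cache file idea described above can be sketched roughly as follows in Python. The function name `cached_page`, its parameters and the `render` callback (which stands for whatever code queries the database and builds the HTML) are assumptions of mine, so treat this as one possible shape rather than a finished implementation.

```python
import os
import time


def cached_page(cache_path, max_age_seconds, render):
    """Return cached HTML while it is fresh, otherwise rebuild the cache.

    render is a hypothetical callback that builds the page from the
    database and returns it as a string.
    """
    try:
        age = time.time() - os.path.getmtime(cache_path)
        if age < max_age_seconds:
            with open(cache_path, encoding="utf-8") as cache_file:
                return cache_file.read()
    except OSError:
        pass  # cache file missing or unreadable: fall through and rebuild

    html = render()
    # Write to a temporary file first so visitors never see a half-written cache.
    tmp_path = cache_path + ".tmp"
    with open(tmp_path, "w", encoding="utf-8") as cache_file:
        cache_file.write(html)
    os.replace(tmp_path, cache_path)
    return html
```

For content that changes once a day you might call `cached_page("cache/blog.html", 24 * 60 * 60, render_blog)`, where the path and `render_blog` are again placeholders. Only the first request after the interval expires pays the cost of hitting the database.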

5. JavaScript, Ajax and iframes

These are NOT SEO friendly, and I would suggest minimizing the use of JavaScript and iframes. I would advise opting for server-side validation rather than relying on JavaScript. In dynamic pages, avoid using Ajax to fetch records: bots often cannot read content that Ajax generates, so it is not considered SEO friendly. Remember that Ajax is JavaScript in the browser requesting data from a server-side script (PHP, for example), and bots tend to skip JavaScript. Ajax is fine for things like loading image galleries or checking login credentials. Just don't use it for content-related areas.
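
For the validation point, the check can live entirely on the server, so the form works the same whether or not JavaScript runs. The Python sketch below assumes a hypothetical comment form with `name`, `email` and `message` fields; the field names and the deliberately loose e-mail pattern are my own choices for illustration.

```python
import re

# Loose sanity check only: something@something.something, no spaces.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def validate_comment(form):
    """Validate submitted form data on the server; return errors by field."""
    errors = {}
    if not form.get("name", "").strip():
        errors["name"] = "Name is required."
    if not EMAIL_PATTERN.match(form.get("email", "").strip()):
        errors["email"] = "A valid e-mail address is required."
    if not form.get("message", "").strip():
        errors["message"] = "Message is required."
    return errors
```

An empty dictionary means the submission is acceptable; otherwise the page can be rendered again on the server with the error messages next to the fields.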

So there you have it. My own SEO guide dedicated directly to my fellow programmers! If you found this helpful or have any questions please make sure to write a note below and I will make every effort to respond quickly to you!

Bravo! Excellent & Informative post.

Probably a logical list of ‘to dos’ for programmers in fulfilling SEO requirements of every website.

Along with aesthetic and programming consistency, designers & programmers should also focus on the reason for creating the site-for business leads! which can be catapulted only by SEO. Failing to comply with such SEO needs will create road blocks in the site’s path to prosperity.

Till recently programmers were considered as second tier in SEO strategy and their contributions were mostly glossed over. But this post surely is a wake up call to the programmers and makes them feel that they are very much central to any SEO success. It further educates them on the ways and means of making this possible. Really an educative and informative post to be sure

nice article! definitely its a guide for programmers to create search engine friendly websites.