Google continuously works to enhance its algorithm to index content around the web. Because content delivered by Ajax doesn’t generate distinct pages (URLs), it has been considered non-SEO-friendly. The problem is that more and more of the best content is being generated by Ajax. Google now recognizes this and has announced how you can get your Ajax content indexed.

Slightly modify the URL fragments for AJAX pages

Developers use the hash sign (#) in URLs, as a fragment identifier, to distinguish one piece of Ajax content from another on the same page. It's great for users, but search engine spiders usually ignore it. For Google to crawl your Ajax content, the fragment must begin with a hash (#) followed by an exclamation point (!), a combination known as the “hashbang” (#!). The example URLs further down show how it is implemented.

Allow search engine crawlers to access these URLs by escaping the state

To allow Google to index your Ajax content, you must use the hashbang in the URL. Google interprets it in a special way: it takes everything after the hashbang and passes it to the site as a URL parameter instead. The name of that parameter is: _escaped_fragment_

Google's crawler then rewrites the URL and requests the content from that rewritten, static URL. Here is an example of what the rewritten URL looks like:

http://www.wyselabs.com/ajax-test/#!Kid

becomes

http://www.wyselabs.com/ajax-test/?_escaped_fragment_=Kid
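The rewrite shown above can be sketched in a few lines of Python. This is only an illustration of the mapping, not Google's actual code: the function name `to_escaped_fragment_url` is made up for this example, and the percent-encoding is an approximation (it escapes more characters than the crawler strictly needs to).

```python
from urllib.parse import quote, urlsplit, urlunsplit


def to_escaped_fragment_url(url: str) -> str:
    """Map a hashbang (#!) URL to its _escaped_fragment_ form.

    Hypothetical helper for illustration; URLs without a hashbang
    are returned unchanged.
    """
    parts = urlsplit(url)
    if not parts.fragment.startswith("!"):
        return url  # plain "#fragment" or no fragment: nothing to rewrite

    state = parts.fragment[1:]  # everything after "#!"
    # Assumption: percent-encode the state so characters such as "&" or "#"
    # cannot be confused with the rest of the query string.
    escaped = quote(state, safe="=/")
    param = "_escaped_fragment_=" + escaped

    # Append to an existing query string if there is one.
    query = f"{parts.query}&{param}" if parts.query else param
    return urlunsplit((parts.scheme, parts.netloc, parts.path, query, ""))


print(to_escaped_fragment_url("http://www.wyselabs.com/ajax-test/#!Kid"))
# -> http://www.wyselabs.com/ajax-test/?_escaped_fragment_=Kid
```

Running it on your own hashbang URLs is a quick way to see which URLs your server should expect from the crawler.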

Google will list the new URLs in the SERPs (without hash or hashbang).
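On the server side, a request that carries the _escaped_fragment_ parameter has to be answered with static HTML for that Ajax state. The sketch below shows one possible way to make that decision in Python. Everything in it is an assumption made for illustration: the `SNAPSHOTS` table, its HTML, and the `select_response` function are hypothetical, and a real site would generate or pre-render the snapshots from its own content.

```python
from typing import Tuple
from urllib.parse import parse_qs, urlsplit

# Hypothetical pre-rendered HTML snapshots, keyed by Ajax state name.
SNAPSHOTS = {
    "Kid": "<html><body><h1>Kid</h1><p>Static copy of the Kid content.</p></body></html>",
}

# Hypothetical page shell that regular visitors get; scripts fill it in via Ajax.
AJAX_SHELL = "<html><body><div id='content'></div></body></html>"


def select_response(url: str) -> Tuple[int, str]:
    """Return (status code, HTML body) for a requested URL."""
    query = parse_qs(urlsplit(url).query, keep_blank_values=True)

    if "_escaped_fragment_" not in query:
        # Normal browser request: serve the Ajax page as usual.
        return 200, AJAX_SHELL

    # Crawler request: parse_qs has already percent-decoded the state.
    state = query["_escaped_fragment_"][0]
    if state in SNAPSHOTS:
        return 200, SNAPSHOTS[state]
    return 404, "<html><body>Not found</body></html>"


status, body = select_response(
    "http://www.wyselabs.com/ajax-test/?_escaped_fragment_=Kid"
)
print(status, body)
```

The important design point is that the snapshot returned for a state must match what a visitor sees after the Ajax call completes; otherwise the indexed page and the live page will differ.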

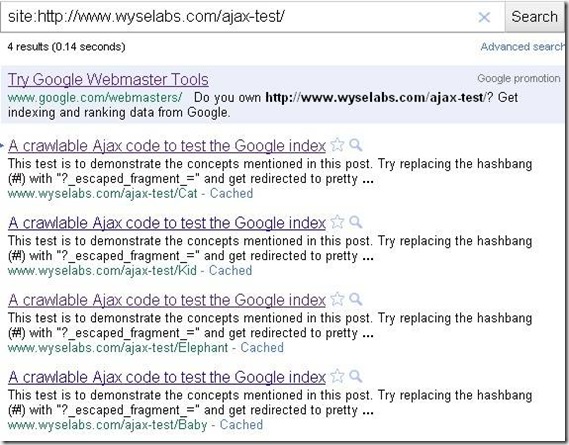

We at TechWyse are always researching and testing new tools. We have successfully completed our Google Ajax index test!

The outcome shows that our Ajax content is crawlable: a single-page Ajax website has been indexed as four pages in Google.

As this concept is still in Beta, there are a few things to keep in mind before you decide to program your entire website in Ajax:

1) Only Google's algorithm can understand the Ajax states and cache them as different pages; other search engines currently do not.

2) The page load time is increased because of the Ajax scripts.

3) Unique meta tags cannot be implemented for the pages that contain Ajax content, so you will lose ranking weight in many other search engines.

Hi Elan, I know this post is more than a year old, but I am looking for the link you mention on how to configure the server. I cannot find it; where is it? I hope you can tell me, because I am having problems with _escaped_fragment_ on my site (http://goo.gl/OgSZ9).

Sorry for my English. thanks

Hello Ricki, thanks for reading. Follow the link below to learn how to set up your server to handle requests for URLs that contain _escaped_fragment_

Elan, how did you manage to redirect ?_escaped_fragment_= to a pretty URL? On my site it redirects to a version of the URL that still shows the escaped fragment. I'm interested to see how this was achieved.

@Pixelshots, thanks for reading.

Google can crawl and index the content only when the hashbang is used, but it then shows the pages in its index without the hashbang. You can check this with the pages that you tested.

As for analytics, the pages cannot be tracked individually by default, but event tracking (or virtual page views) can be used to track them.

Thanks for a nice article on Ajax crawling.

I have a few questions…

In its own documentation, Google says that it will index the #! URL instead of the escaped-fragment one, and I was successful in doing this.

But you are saying that the pages get indexed without the hashbang. How? By redirecting all URLs with a 301?

And what about analytics? Can we get the site traffic tracked normally, as with a pure HTML site?

I hope you will answer…

Great examples, Elan! When building such pages in Ajax, we need to make sure not just that the hash fragments change but also that the content changes accordingly when a user clicks through from the SERPs. This needs to be kept in mind while programming; otherwise the hash fragments will differ but the content will be the same. Also, this applies only to Google.

Max, thanks for reading.

1) Definitely, it's going to be the same for mobile devices.

2) It can be used in any scenario where you deliver content with Ajax.

First of all, congrats, Elan, on completing the Ajax test, and thanks for this awesome post.

I really feel that Google’s proposal, or should I say the “AJAX solution”, is really promising for websites that have been suffering from a lack of visibility in Google search results. Since I am a rookie in SEO, I have a couple of questions:

1. Will this proposal be effective for mobile devices as well?

2. In what scenarios should we use the “AJAX Solution” (are there any specific scenarios)?

Google is really harnessing the power of Ajax for Google Instant too… Elan, your post is really eye-opening on how to use Ajax from an SEO perspective.